Adobe Experience Manager Load Testing with JMeter and BlazeMeter

Load testing your new Adobe Experience Manager (AEM) website is a crucial step. In fact, for Adobe Managed Services (AMS) customers it’s required before new sites can be released to the public. Adobe wants to make sure any custom code that was developed doesn’t have memory leaks or other negative effects which only become apparent under sustained load.

This short guide walks through some key aspects to consider as well as how to handle some special considerations around user-generated content and load testing content behind a login.

Note that I’m specifically covering the end-user facing site here and not load testing for the authoring environment. If you’re interested in that aspect, check out Adobe’s docs and white paper.

Prerequisites

Before embarking on your load testing journey, make sure you have the following details covered:

The production content (or as close as you can get it) has been published to an environment that you can run tests against.

The environment you’re testing on has the latest code deployed to it and matches, as much as possible, the code that you want to go live with. That means this should include all your Dispatcher caching configs as well as any CDN setup. If you’re looking for some guidance and quick wins on performance improvements, check out my blog post on the topic.

If you’re testing in a non-Production environment, make sure the specs match what you’re going to have in Production later on. If you’re using AMS, this should already be the case.

Make sure the website you’re load testing is either available publicly or you’ve allowed the BlazeMeter IP addresses to access it.

Set Testing Goals

Before you can run any meaningful tests, make sure you’re clear about your goals. To start, gather some historical analytics information, such as:

- How many page views & visits are you currently getting per day/week/month?

- How many page views & visits are you expecting to get per day/week/month?

- How many page views & visits are you getting at peak times, for example after an email campaign? How much are you expecting these peaks to increase with the new site launch?

- What is the average & peak duration for a page visit?

These numbers will help you to come up with a plan for how to configure your load test.

One of the things that may be confusing is how to determine the number of "concurrent users." How do page views and visits translate to a number of "concurrent users" that you can plug into a load testing script? Here’s a formula for a quick approximation:

Concurrent users = Peak hourly visits x Average visit duration (in minutes)/60

For example, if your analytics data is showing you have 2,000 peak hourly visits and they’re staying for an average of 2 minutes, you’d end up with about 67 concurrent users (2,000 x 2 / 60 = 66.7). Keep in mind that this is only an approximation and you’ll also want to take your expected growth into consideration when putting your test script together.
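If you’d rather not do this by hand for several scenarios, the formula is easy to script. The following is a minimal sketch, not something JMeter or BlazeMeter ships: the class name, the method names, and the 1.5 growth factor are all assumptions for illustration. It rounds up rather than truncating, so the estimate never undershoots.

```java
// Sketch only: class and method names are hypothetical helpers.
final class ConcurrentUserEstimate {

    // Concurrent users = peak hourly visits x average visit duration (minutes) / 60,
    // rounded up so the test never undershoots the estimate.
    static int estimate(int peakHourlyVisits, double avgVisitMinutes) {
        return (int) Math.ceil(peakHourlyVisits * avgVisitMinutes / 60.0);
    }

    // Scales an estimate by an assumed growth factor (1.5 means 50% expected growth).
    static int withGrowth(int concurrentUsers, double growthFactor) {
        return (int) Math.ceil(concurrentUsers * growthFactor);
    }

    public static void main(String[] args) {
        int baseline = estimate(2000, 2.0);        // the example from the text
        int target = withGrowth(baseline, 1.5);    // growth factor is an assumption
        System.out.println("Baseline concurrent users: " + baseline);
        System.out.println("Target with growth: " + target);
    }
}
```

The growth factor is the part you’ll need to replace with your own expectation for the new site.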

Next, you’ll want to put some thought into what your testing scenarios will be. Are your visitors only passively browsing a marketing site or do you have interactivity on the site (e.g. contact forms, comments, etc.)? You may also want to test individual endpoints if you’re exposing APIs of some sort, or if one-off resources like a sitemap.xml are being consumed by third parties.

Define Your Tools

There are tons of tools and services out there to do load testing. This blog post specifically talks about Apache JMeter and BlazeMeter. JMeter is widely used, free, and open source. BlazeMeter is a commercial product that supports JMeter test scripts among others.

So, why use a paid service if you can run tests for free with JMeter? In short: convenience. Sure, you can run JMeter tests from your laptop or even spin up a server yourself and run the tests from there, but all of that hassle is taken care of if you’re using a SaaS platform. And let’s face it, if you’re paying for a premium CMS like AEM, you can afford a $150 load test.

For testing API endpoints and individual AEM servlets I’ve successfully used Apache Bench in the past. It’s a command-line tool that’s straightforward to use, so I won’t go over it here.

Create Your Test Scenarios

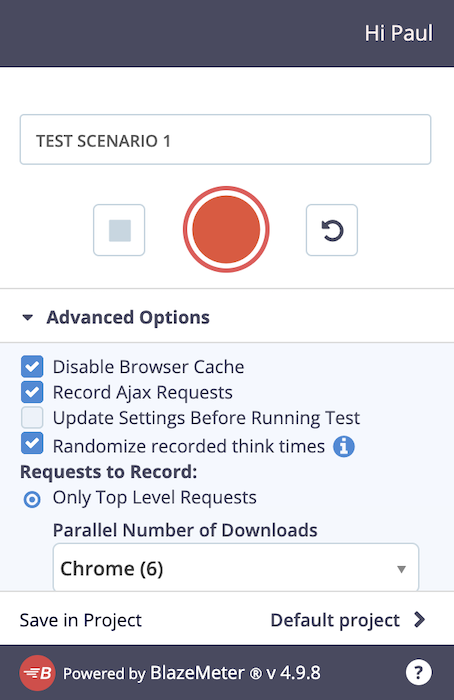

BlazeMeter has a free Chrome extension which "records all of the HTTP/S requests that your browser sends, creates a JMeter or Selenium script, and uploads it to Blazemeter, where you can execute it with a single click." You can use this extension even if you’re not planning to use BlazeMeter’s paid service. It takes all the trouble away from creating the JMeter test plan yourself and comes with some best practice pre-sets.

Creating the test is as easy as giving your test scenario a name and then clicking on the big red record button. You may choose to change some of the advanced settings but for most use cases the default should suffice. Once the recording is started, just hit the website you want to test and browse through just like your end-users would—obviously keeping in mind the scenario you want to test. You can pause or stop recording at any point.

When you’re done, the extension also allows you to download the recording as a JMX file which you can open in JMeter. That way, you can see what was recorded in detail and make adjustments as well as handle advanced use cases (see below).

If you’re looking for an alternative to the browser extension method, Gaston Gonzalez’s "Poor Man's Guide to Load Testing AEM" from a few years ago mentions another interesting approach: grabbing the request log from an existing AEM website. This obviously only makes sense if you already have a site running on AEM and if the content structure hasn’t changed between the old version and the new one that you want to load-test.

Once you have your JMX file ready to go, you upload it to the BlazeMeter platform. You then have a chance to tweak the configuration of your test. Some key settings include "Total Users" (i.e. concurrent users that you’ve defined above), duration, and ramp up time. If you have specific regional or international traffic, you can also update the test configuration accordingly so that the test mimics real expected traffic patterns as much as possible.

Consider increasing the concurrency and duration of your tests incrementally, for example, 20 concurrent users, then 50, then 100, and so on. If you’re interested in strategies for the "ramp up" value, check out "JMeter Ramp-Up - The Ultimate Guide." At a high level, assuming you’re not specifically running a spike performance test, you’ll want to configure a ramp up that is gradual enough that your server doesn’t get hit by all of your concurrent users at once.
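To get a feel for what a given ramp-up value means in practice, it helps to work out how far apart the virtual users start. Here is a rough sketch, assuming users are started at even intervals across the ramp-up period (which is how a standard JMeter thread group behaves). The class name and the 300-second ramp-up are hypothetical values, not recommendations.

```java
// Sketch only: names and the 300-second ramp-up are illustrative assumptions.
final class RampUpSketch {

    // Seconds after test start at which each virtual user begins,
    // assuming starts are spread evenly across the ramp-up period.
    static double[] startOffsets(int users, double rampUpSeconds) {
        double[] offsets = new double[users];
        if (users == 0) {
            return offsets;
        }
        double step = rampUpSeconds / users;
        for (int i = 0; i < users; i++) {
            offsets[i] = i * step;
        }
        return offsets;
    }

    public static void main(String[] args) {
        int[] concurrencySteps = {20, 50, 100}; // the incremental runs mentioned above
        double rampUpSeconds = 300.0;           // hypothetical value
        for (int users : concurrencySteps) {
            double[] offsets = startOffsets(users, rampUpSeconds);
            System.out.printf("%d users: one new user every %.1f s, last one starts at %.1f s%n",
                    users, rampUpSeconds / users, offsets[users - 1]);
        }
    }
}
```

If the gap between users comes out at a fraction of a second, the ramp-up is probably closer to a spike than you intended.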

Before running your test on BlazeMeter, it may make sense to run it locally on JMeter, with a listener like "View Results Tree" so that you can confirm individual requests are responding as you would expect. Once that’s confirmed, you should also run a series of free "Debug" tests in BlazeMeter to make sure you have all of the errors in the test plan ironed out.

Handling Some Advanced Use Cases

The default recording works for most standard marketing-type websites, but occasionally you may have more difficult scenarios to cover. With JMeter being open-source and widely used, you can find tons of resources online to adjust your test plans as necessary. I’m going to cover a couple of use cases we recently ran into.

CSRF Checks

If you’re running tests against an AEM Author instance or a Publish one that has authentication and user-generated content, you’ll need to deal with AEM’s CSRF checks. Otherwise, all your POST requests are going to fail. Here’s one approach to solving the problem:

Download the recorded test plan as JMX and open it up in JMeter. Confirm you’re seeing some requests to /libs/granite/csrf/token.json in the list of requests.

Add a "JSON Extractor" to those request(s) so that you can extract the CSRF token as a variable.

Next, you have to update the POST requests to make use of the variable. For that, add a "BeanShell PreProcessor" with the following script as shown in the screenshot:

import org.apache.jmeter.protocol.http.control.Header;

// Add the token extracted earlier as a request header on the current sampler.
sampler.getHeaderManager().add(new Header("csrf-token", vars.get("csrf-token")));

Thank you Nithin Dasyam and Pedro Toc for helping to figure this out.
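If you’re wondering what the JSON Extractor step is actually pulling out, here’s a small standalone sketch of the same idea in plain Java. It assumes the token endpoint responds with a flat JSON object that has a "token" field. That response shape, the class name, and the sample value are assumptions on my part, so check the real response in "View Results Tree" before relying on it.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Sketch only: mimics what a JSON Extractor would do for an assumed {"token":"..."} body.
final class CsrfTokenSketch {

    // Matches the value of a top-level "token" string field.
    private static final Pattern TOKEN =
            Pattern.compile("\"token\"\\s*:\\s*\"([^\"]*)\"");

    // Returns the token if the body contains one, otherwise an empty Optional.
    static Optional<String> extractToken(String json) {
        Matcher m = TOKEN.matcher(json);
        return m.find() ? Optional.of(m.group(1)) : Optional.empty();
    }

    public static void main(String[] args) {
        String body = "{\"token\":\"example-token-value\"}"; // made-up sample body
        System.out.println(extractToken(body).orElse("no token found"));
    }
}
```

In the test plan itself the extractor does this for you. The point is only that the value stored in the variable should be the bare token string, without quotes or surrounding JSON.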

Handling Authentication

For websites that require authentication, you may have to do some special configuration in JMeter. Simple use cases with just a single account logging in can be handled by the recording you’ve made above. However, for more realistic tests you’ll want to set it up so that every concurrent user in the test uses different credentials.

You can find a detailed How-To in the BlazeMeter docs but the high-level approach is as follows:

Make sure that the POST request, which authenticates the user, is part of your recorded test plan because you’ll need to replace your example credentials with placeholders.

Create a CSV file that contains a list of usernames and passwords. The load test will read these rows one at a time, one set of credentials per virtual user.

Download the recorded test plan as JMX and open it up in JMeter.

Add and configure the "CSV Data Set Config" as described in the docs. Note that the "Filename" doesn’t have a full path here so that it works with BlazeMeter. If you want to test this out locally, you’ll have to point to the CSV file’s actual location. You can see an example in the screenshot below:

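Next, update your login POST request to make use of the variables you’ve defined, referencing them with JMeter’s ${...} syntax in place of the recorded credentials (for example ${username} and ${password}, if those are the variable names you configured):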
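For the CSV file from the steps above, you probably don’t want to type out dozens of rows by hand. Below is a minimal sketch that writes one username,password pair per line. The file name users.csv, the loadtest-user prefix, and the password pattern are all hypothetical, and the accounts still have to exist on the AEM side. It writes no header row, which assumes you list the variable names in the CSV Data Set Config itself.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Sketch only: file name, user prefix, and password pattern are placeholders.
final class CredentialCsvSketch {

    // Builds one "username,password" row per test account, numbered from 1.
    static List<String> rows(int users, String prefix) {
        List<String> rows = new ArrayList<>();
        for (int i = 1; i <= users; i++) {
            rows.add(prefix + i + ",Passw0rd-" + i);
        }
        return rows;
    }

    public static void main(String[] args) throws IOException {
        // One row per concurrent user so no two virtual users share a login.
        Files.write(Paths.get("users.csv"), rows(67, "loadtest-user"));
    }
}
```

Generating at least as many rows as you have concurrent users keeps every virtual user on its own account.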

Randomize Traffic

With a default test plan, or if you unchecked "Randomize recorded think times" in the BlazeMeter Chrome extension, JMeter will try to execute all requests as quickly as possible, one right after another, without breaks.

This obviously does not mimic real user patterns, so to mitigate this there’s a concept of "timers" in JMeter. They can be added to the test plan to randomize traffic and spread it out as it would be in a real-life scenario. The BlazeMeter docs have a pretty in-depth explanation of all the timers that are available so I won’t go into the details here.
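To make the idea concrete, here’s a tiny sketch of what a uniform random timer boils down to: a fixed pause plus a random extra amount. The class name and the 1-second and 2-second values are assumptions for illustration. In a real test plan you’d configure the timer in JMeter rather than write code.

```java
import java.util.Random;

// Sketch only: illustrates the "constant offset plus random part" idea behind a uniform timer.
final class ThinkTimeSketch {

    // Returns a pause in milliseconds in the range
    // [constantOffsetMillis, constantOffsetMillis + randomMaxMillis).
    static long thinkTimeMillis(Random rng, long constantOffsetMillis, long randomMaxMillis) {
        return constantOffsetMillis + (long) (rng.nextDouble() * randomMaxMillis);
    }

    public static void main(String[] args) {
        Random rng = new Random();
        for (int i = 0; i < 5; i++) {
            // Hypothetical settings: at least 1 s, plus up to 2 s of random extra pause.
            System.out.println(thinkTimeMillis(rng, 1000, 2000) + " ms");
        }
    }
}
```

Each virtual user pausing for a slightly different amount of time is what keeps the requests from arriving in lockstep.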

Thank you Nithin Dasyam for this tip!

Running Your Test

Before starting the load test, make sure you inform all relevant parties, for example, your hosting provider (AMS, AWS, etc.) as well as any third-party providers you are integrating with. They may have to turn off alerts on their end or at least be aware so that they’re not surprised by sudden spikes in traffic.

As the test runs, it’s a good idea to keep an eye on your AEM and Dispatcher logs to make sure you’re not seeing anything out of the ordinary. Especially with long-running tests, you’ll want to watch for OutOfMemoryError entries and unclosed sessions.
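If you’d like something a bit more systematic than tailing the log by eye, a quick scan for known trouble markers can help. This is a rough sketch: the class name is hypothetical, and the "Unclosed session" phrase in particular is an assumption about how your AEM version words that warning, so adjust the markers to match what your logs actually contain.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Sketch only: marker phrases are assumptions; adapt them to your own log output.
final class LogScanSketch {

    static final String[] MARKERS = {"OutOfMemoryError", "Unclosed session"};

    // Counts how many lines contain each marker phrase.
    static Map<String, Integer> countMarkers(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String marker : MARKERS) {
            counts.put(marker, 0);
        }
        for (String line : lines) {
            for (String marker : MARKERS) {
                if (line.contains(marker)) {
                    counts.merge(marker, 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    public static void main(String[] args) throws IOException {
        if (args.length < 1) {
            System.out.println("Usage: java LogScanSketch.java <path-to-log-file>");
            return;
        }
        // Reads the whole file into memory, which is fine for a quick check on a modest log.
        countMarkers(Files.readAllLines(Paths.get(args[0])))
                .forEach((marker, count) -> System.out.println(marker + ": " + count));
    }
}
```

Running it against a copy of the log before and after the test gives you a simple before/after comparison.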

If you’re an AMS customer, you should also have access to dashboards in New Relic which you can monitor during the test.

Understanding Your Results

Once you’ve completed your test(s), it’s time to make sense of the data.

The first thing you’ll want to check is the error rate. For example, if one of the advanced scenarios like authentication applies to you, make sure you’re not seeing a lot of 403 Forbidden errors for the CSRF checks.

Next, take a look at the response times. You may check the average response time for a quick idea but ultimately the 90th, 95th, or 98th percentile response times may be more meaningful because the averages can be heavily skewed by outliers.
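A small worked example shows why. The sketch below uses ten made-up response times, nine of them fast and one very slow, and compares the average with percentiles computed by the simple nearest-rank method. The numbers and names are invented for illustration, and reporting tools may use a slightly different percentile definition.

```java
import java.util.Arrays;

// Sketch only: sample data is invented; percentile uses the nearest-rank method.
final class ResponseTimeStats {

    static double average(long[] millis) {
        long sum = 0;
        for (long ms : millis) {
            sum += ms;
        }
        return (double) sum / millis.length;
    }

    // Nearest-rank percentile: the smallest value with at least p percent of samples at or below it.
    static long percentile(long[] millis, int p) {
        long[] sorted = millis.clone();
        Arrays.sort(sorted);
        int rank = (int) Math.ceil(p * sorted.length / 100.0);
        return sorted[Math.max(rank, 1) - 1];
    }

    public static void main(String[] args) {
        long[] sample = {120, 130, 125, 140, 135, 128, 132, 138, 126, 4000};
        System.out.println("average: " + average(sample) + " ms");          // 517.4
        System.out.println("median:  " + percentile(sample, 50) + " ms");   // 130
        System.out.println("p90:     " + percentile(sample, 90) + " ms");   // 140
    }
}
```

One slow request out of ten drags the average to over half a second, while the median and 90th percentile still reflect what most visitors experienced.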

One thing we’ve seen a lot is much slower response times at the beginning of a test, which then normalize after the first few minutes. If you’re seeing a pattern like that, it may also be a sign that you need to increase your ramp-up time so not all concurrent users hit your server at exactly the same time.

The other thing to note is that the reported time for an HTML page request includes all associated resources like images, JavaScript, fonts, and CSS. That is by design, so if you’re alarmed like I was by slow requests for plain old pages, that may be the reason.

Critical Process

Load testing is a critical final step before publishing your AEM website, but running basic load tests is not as scary as it seems. Using a browser extension to record a test session and leveraging a SaaS platform that handles all the logistics of the actual test dramatically lowers the barrier. I hope this article has made the topic a little bit less intimidating for you.